Agentic Feedback Loops: The Hidden Foundation of Autonomous AI

Introduction

When enterprises envision agentic AI, the narrative typically centres on one compelling outcome: full autonomy. Agents that resolve tickets without escalation, rewrite pricing without approval, trigger refunds without review. The promise is undeniable. Yet organisations pursuing this path often discover an uncomfortable truth: autonomy introduced prematurely is the fastest route to eroded trust.

McKinsey's research reveals a critical insight: "Organizations that win will pair a clear strategy with tight feedback loops and disciplined governance, turning novelty into measurable value."

Yet more than three-quarters of companies deploying generative AI report no material earnings impact. The disconnect is instructive. Most enterprises activate autonomy before establishing the intelligence systems required to learn from it.

"Without visibility into how agents behave, why they fail, and where they succeed, autonomy becomes reckless. With it, autonomy becomes an evidence-based progression."

The Three Feedback Loops Your Product Team Cannot Afford to Skip

1. The User-Agent Loop: Building Signals Instead of Guessing

Nebuly captures the adoption barrier elegantly: "Agentic AI adoption won't scale without user trust, and user trust depends on feedback." This seems obvious until you examine how most enterprises instrument their agent deployments.

Consider what happens in practice. An agent makes a suggestion. A user approves it. The system logs "task completed" and moves forward. But what about the moments when users silently disable the agent, never filing a complaint? What about the suggestions they reject without explanation, or the edge cases where the agent's reasoning confuses rather than clarifies?

These are not data gaps. They are decision hazards.

Product teams need three capabilities at minimum:

Explicit feedback channels: Users must have accessible, frictionless ways to signal disagreement. "This suggestion was inaccurate." "This action moved too far." "This decision was exactly right." Each response teaches the agent and the team.

Interpretable reasoning: When an agent recommends an action, can it explain itself in plain language? Not in embeddings or probability scores, but in business logic a product manager can audit. If your agent cannot articulate its reasoning, your team cannot learn from its failures.

Silent-churn detection: The most dangerous signal is the absence of signal. When users quietly turn off an agent without complaint, your system should notice. This is where feedback intelligence transforms from nice-to-have into strategic necessity, capturing the behavioural indicators that predict disengagement before it hardens into permanent distrust.

Without these loops, you optimise for completion metrics while missing the user experience erosion happening beneath the surface.
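Silent-churn detection in particular lends itself to a small illustration. The following is a minimal sketch, not a reference implementation: the `UserActivity` record, its field names, and the 14-day idle window are all assumptions made for the example. It flags users who remain active in the product but have stopped invoking the agent without ever leaving explicit feedback.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class UserActivity:
    """Hypothetical per-user record; field names are illustrative."""
    user_id: str
    last_agent_use: date          # last day the user invoked the agent
    last_product_use: date        # last day the user was active in the product
    explicit_feedback_count: int  # ratings, comments, and similar signals


def find_silent_churners(records, today, idle_days=14):
    """Return ids of users who still use the product but have not used the
    agent for at least `idle_days`, and who never gave explicit feedback."""
    cutoff = today - timedelta(days=idle_days)
    return [
        r.user_id
        for r in records
        if r.last_agent_use <= cutoff        # the agent has gone quiet
        and r.last_product_use > cutoff      # but the user is still around
        and r.explicit_feedback_count == 0   # and never said why
    ]


today = date(2026, 1, 31)
records = [
    UserActivity("ana", date(2026, 1, 30), date(2026, 1, 31), 0),  # active agent user
    UserActivity("ben", date(2026, 1, 5), date(2026, 1, 29), 0),   # silent churner
    UserActivity("cy", date(2026, 1, 5), date(2026, 1, 29), 3),    # left feedback
    UserActivity("dee", date(2025, 12, 1), date(2025, 12, 2), 0),  # left the product
]
print(find_silent_churners(records, today))
```

Separating "left the agent" from "left the product" is the design choice that matters here: only the former points to an agent-specific trust problem.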

2. The Agent-Product Team Loop: From Opacity to Insight

Salesforce emphasises a principle many organisations understand but few execute: "Build clear decision frameworks and establish feedback loops that show impact. Otherwise, you'll lose trust in both the technology and leadership's commitment to making it work."

Translated to operational terms, this means your product team needs continuous visibility into agent performance across dimensions that matter:

Reversal patterns: Which actions do humans most frequently override? If an agent suggests pricing adjustments but humans reverse 40% of them, that is not a data quality problem. It is a signal that your safety thresholds are misaligned with business risk appetite. Each reversal pattern reveals where the agent's model of the business diverges from reality.

Escalation hotspots: Where does your agent most often defer to humans? This is not failure. It is your agent correctly recognising its own uncertainty. But patterns in escalations tell you where your training data is weakest, where your workflows are most ambiguous, or where human judgment is genuinely irreplaceable.

Workflow acceptance profiles: Some agent workflows will show high approval rates, minimal edits, and zero policy violations. Others will show the opposite. The difference is not random. It reflects whether the agent has learned the underlying business logic of that workflow. The high-acceptance workflows become your templates for safe expansion.

This visibility transforms autonomy decisions from hunches into calibrated choices. Promoting an agent from "suggest and wait for approval" to "execute and notify" becomes an evidence-based progression rather than a leap of faith.
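As a rough illustration of how reversal patterns, escalation hotspots, and acceptance profiles might be computed, the sketch below aggregates a hypothetical event log into per-workflow rates. The log format and the outcome labels (`accepted`, `reversed`, `escalated`) are assumptions for the example, not a description of any particular product's telemetry.

```python
from collections import Counter, defaultdict

# Hypothetical event log of (workflow, outcome) pairs; the data is made up.
events = [
    ("pricing", "accepted"), ("pricing", "reversed"), ("pricing", "reversed"),
    ("pricing", "accepted"), ("pricing", "escalated"),
    ("refunds", "accepted"), ("refunds", "accepted"), ("refunds", "accepted"),
    ("refunds", "escalated"),
]


def workflow_profiles(events):
    """Summarise each workflow as acceptance, reversal, and escalation rates."""
    counts = defaultdict(Counter)
    for workflow, outcome in events:
        counts[workflow][outcome] += 1

    profiles = {}
    for workflow, c in counts.items():
        total = sum(c.values())
        profiles[workflow] = {
            "acceptance_rate": c["accepted"] / total,
            "reversal_rate": c["reversed"] / total,
            "escalation_rate": c["escalated"] / total,
        }
    return profiles


for workflow, profile in workflow_profiles(events).items():
    print(workflow, profile)
```

In this toy data the pricing workflow shows the 40% reversal rate discussed above, while refunds shows no reversals at all, which is the kind of contrast that can inform where autonomy is expanded first.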

3. The Business Outcome-Autonomy Loop: Treating Autonomy as a Ladder, Not a Switch

Here lies the most common misconception: that agentic AI operates as a binary. It is on, or it is off. In reality, it is a ladder, and each rung must correspond to specific business and safety metrics.

Before expanding agent autonomy, product leaders must ask themselves uncomfortable questions:

At what acceptance rate and error profile are we genuinely comfortable with autonomous execution? If your agent gets it right 92% of the time, is that sufficient for customer refunds? For pricing decisions? For contract terms? The answer depends on your business model, your risk tolerance, and the cost of errors. There is no universal threshold, but every organisation must define its own.

Which decisions must remain human-led regardless of model performance? Certain decisions carry strategic weight, regulatory implications, or relationship risk that no confidence score can justify automating. Identifying these upfront prevents the scenario where your model performs perfectly but your business suffers.

What does success look like at each autonomy level? Define the metrics before you deploy. At 100% assisted suggestions, success might mean adoption rate. At 50% autonomous execution, it might mean reversal rate and user satisfaction. At 100% autonomous execution, it might mean business impact and zero policy violations.
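The three questions above can be encoded as an explicit promotion gate. What follows is a hedged sketch only: the level names, the threshold values in `GATES`, and the `HUMAN_LED` set are placeholders invented for illustration, and each organisation would need to substitute its own.

```python
from dataclasses import dataclass
from enum import IntEnum


class AutonomyLevel(IntEnum):
    SUGGEST_ONLY = 0        # agent proposes, human approves
    EXECUTE_AND_NOTIFY = 1  # agent acts, human is informed afterwards
    FULLY_AUTONOMOUS = 2    # agent acts without routine review


@dataclass
class Gate:
    min_acceptance_rate: float
    max_reversal_rate: float
    max_policy_violations: int


# Placeholder thresholds required to ENTER each level (assumed values).
GATES = {
    AutonomyLevel.EXECUTE_AND_NOTIFY: Gate(0.90, 0.10, 0),
    AutonomyLevel.FULLY_AUTONOMOUS: Gate(0.98, 0.02, 0),
}

# Decisions that stay human-led regardless of model performance (assumed).
HUMAN_LED = {"contract_terms"}


def next_level(workflow, current, acceptance_rate, reversal_rate, policy_violations):
    """Climb at most one rung, and only if the evidence clears the gate."""
    if workflow in HUMAN_LED:
        return AutonomyLevel.SUGGEST_ONLY
    if current == AutonomyLevel.FULLY_AUTONOMOUS:
        return current
    target = AutonomyLevel(current + 1)
    gate = GATES[target]
    passes = (
        acceptance_rate >= gate.min_acceptance_rate
        and reversal_rate <= gate.max_reversal_rate
        and policy_violations <= gate.max_policy_violations
    )
    return target if passes else current


level = next_level("refunds", AutonomyLevel.SUGGEST_ONLY, 0.92, 0.05, 0)
print(level.name)
```

With these placeholder gates, a 92% acceptance rate is enough to move refunds from suggestion to execute-and-notify but not to full autonomy. The sketch only handles promotion; a real policy would presumably also define when a workflow steps back down a rung.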

Enterprises that win with agents adopt a simple discipline: every autonomous decision is a hypothesis, and every outcome is data. This mindset prevents autonomy from becoming cargo-cult AI: impressive to executives, risky to stakeholders.

The Competitive Advantage You Cannot See Until You Need It

The organisations that will dominate the agentic AI era will not be those with the most advanced models or the boldest automation demos. They will be the ones with the clearest mechanisms to listen, learn, and adjust at speed.

This requires more than dashboards. It requires feedback intelligence: systems designed to surface what matters, to capture signals others miss, and to transform operational data into strategic insight.

Your feedback loops must be sharper than your models. Build them first. Activate autonomy later.

Key Takeaways

- Autonomy without feedback is risk without mitigation. Define your feedback loops before expanding agent autonomy.

- User silence is the most dangerous signal. Explicit feedback, interpretable reasoning, and churn detection together create trust that survives mistakes.

- Reversals, escalations, and acceptance patterns are your roadmap. These metrics reveal exactly where your agent is ready to scale and where it needs guardrails.

- Autonomy is a ladder, not a switch. Each rung should be tied to business and safety metrics, with clear decision frameworks before you climb.

Learn how leading teams turn feedback into actionLearn How Leading Teams Turn Feedback Into Action

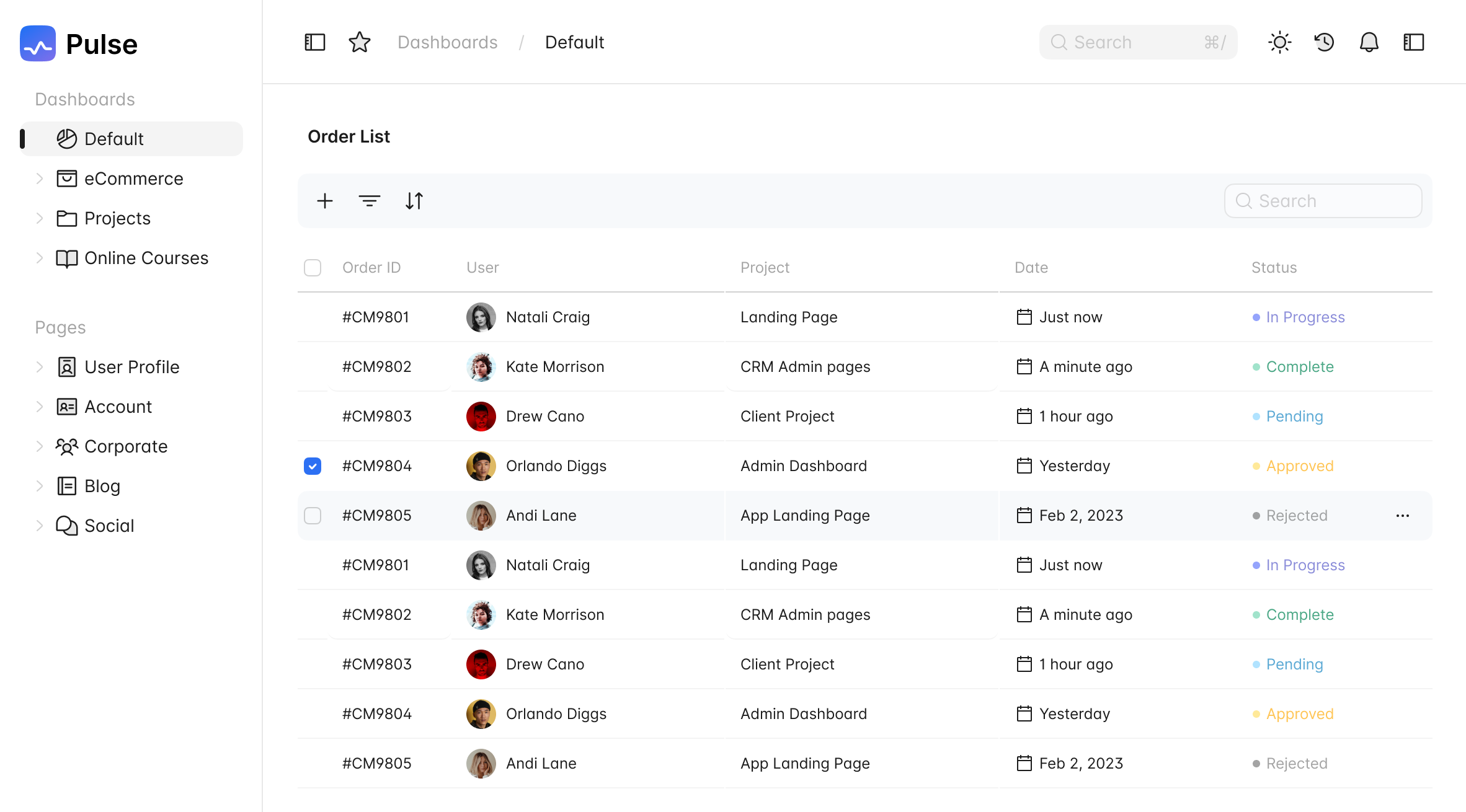

Act: Where Feedback Finally Turns Into Business OutcomesAct: Where Feedback Finally Turns Into Business Outcomes

The gap between insight and execution is where value is lost. Feedback gets analysed, reports get shared, decisions get discussed. And then… nothing happens. Learn how Pulse converts prioritised themes into PRDs, feature briefs, and roadmap items.The gap between insight and execution is where value is lost. Feedback gets analysed, reports get shared, decisions get discussed. And then… nothing happens. Learn how Pulse converts prioritised themes into PRDs, feature briefs, and roadmap items.

22 Apr 202622 Apr 2026

Align: The Hidden System Every Scaling Company NeedsAlign: The Hidden System Every Scaling Company Needs

Misalignment rarely announces itself. It shows up quietly as conflicting priorities and fragmented signals. Learn how Pulse scales alignment with shared intelligence.Misalignment rarely announces itself. It shows up quietly as conflicting priorities and fragmented signals. Learn how Pulse scales alignment with shared intelligence.

15 Apr 202615 Apr 2026

Security & Compliance You Can Trust

Security & Compliance You Can Trust