Can't I Just Build This With an LLM? Why Replicating Pulse Is Much Harder Than It Looks

Introduction

Every week, someone asks us a version of the same question:

"This looks interesting… but couldn't we just build something like this internally using an LLM?"

It's a fair question. On the surface, it feels plausible. Modern AI models can summarise text, detect sentiment, cluster feedback, and generate reports. So why not connect one to your data and build your own feedback intelligence system?

The short answer: because Pulse is not an LLM wrapper. The real answer is more interesting — and far more revealing about how enterprise AI actually works.

The Illusion: If an LLM Can Do It Once, You Can Productise It

The current AI wave has created a widespread misconception:

If an LLM can perform a task once, turning it into a reliable system is easy.

In reality, there's a massive difference between a demo and a production system.

An LLM can:

- Summarise a ticket

- Classify a transcript

- Cluster a dataset

But enterprises don't need isolated answers. They need continuous, reliable, explainable intelligence that runs across millions of signals and ties directly to business outcomes.

That gap — between one-off intelligence and operational intelligence — is where most internal builds fail.

What Looks Like a Prompt Problem Is Actually a Systems Problem

Imagine you try to build a solution internally.

You connect:

- Support tickets

- CRM notes

- Call transcripts

- Slack messages

You ask an LLM:

"Cluster this feedback and tell me what matters."

You'll get an output. Maybe even a good one.

But here's what happens next.

1. Real Enterprise Data Is Messy, Not Model-Ready

Production feedback data is:

- Duplicated

- Incomplete

- Mislabeled

- Contradictory

- Biased

- Multilingual

- Context-dependent

Cleaning this isn't a prompt engineering task. It's a combined data infrastructure, ML pipeline, and orchestration problem.

Before Pulse produces any insight, multiple specialised components run:

- Cleansing agents

- Deduplication agents

- Semantic clustering agents

- Entity resolution systems

- Segmentation models

- Signal weighting logic

Most of this work is invisible. But this layer is the foundation of real intelligence.

2. Raw Insights Without Context Are Misleading

An LLM might tell you:

"Users complain about login issues."

But a product or CS leader actually needs:

"Enterprise customers using SSO in APAC show elevated churn risk due to authentication failures impacting high-value accounts."

That requires:

- Business metadata mapping

- Revenue linkage

- Segmentation intelligence

- Account hierarchy awareness

- Temporal trend analysis

LLMs don't know your business context automatically. Pulse builds a self-learning contextual intelligence layer unique to every organisation.

That contextual layer is one of the hardest systems to build — and the hardest to replicate.

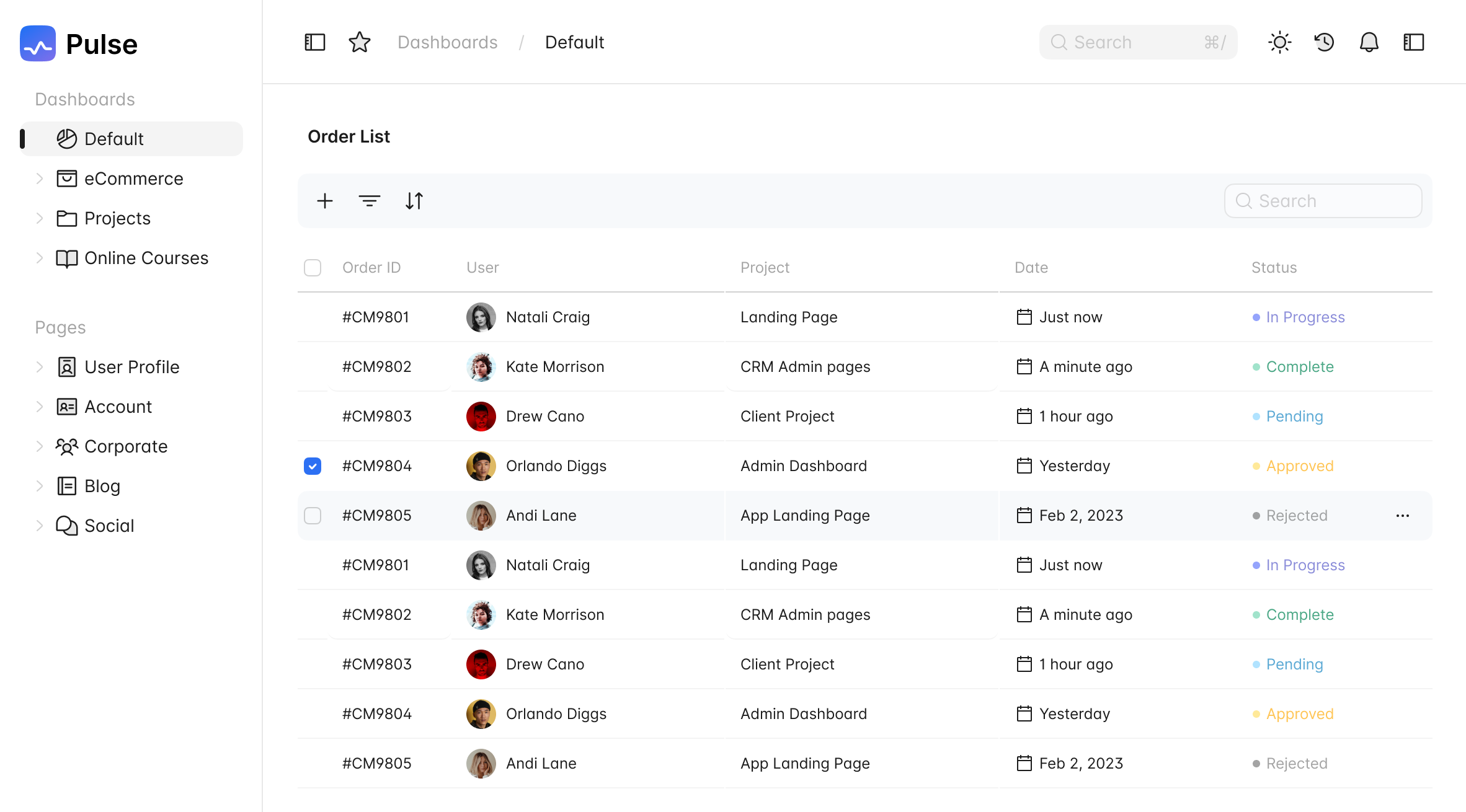

3. Summaries Don't Drive Decisions

Most internal AI builds stop at summaries or dashboards.

But teams don't need summaries. They need decisions.

Real workflows require:

- Prioritised backlogs

- Roadmap-ready items

- Stakeholder-aligned scoring

- Executive-ready narratives

- Execution triggers

This is where DIY solutions stall, because now you're not building a tool. You're building a decision engine.

Pulse doesn't just analyse feedback. It operationalises it:

- Ranks work by impact

- Generates PRDs

- Prepares leadership briefs

- Syncs with execution tools

- Tracks outcomes

That's not a prompt. That's product architecture.

4. Reliability Is the Real Barrier

Anyone can get an LLM to produce output.

Very few can make it:

- Consistent

- Explainable

- Traceable

- Auditable

- Secure

- Fast

- Cost-efficient

Enterprises don't adopt AI because it's clever. They adopt it because it's dependable.

Pulse ensures every recommendation can be traced back to real signals. Every insight can be explained. Every decision can be justified.

That level of reliability requires engineering depth — not prompt tuning.

5. The Hidden Complexity: Continuous Learning Systems

A prototype answers questions. A platform learns.

Pulse continuously improves by observing:

- Which insights users accept or override

- Which recommendations lead to action

- Which signals correlate with revenue outcomes

- Which patterns repeat across customers

This creates a compounding intelligence advantage.

Static internal builds rarely reach this stage because maintaining learning systems requires dedicated infrastructure and modelling layers.

What Companies Realise After Trying to Build It

Teams that attempt internal versions usually discover something unexpected:

They didn't build a feature. They accidentally started building a product company.

What seemed like:

"Let's connect an LLM to feedback"

turns into:

- Pipeline orchestration

- Prompt versioning

- Evaluation frameworks

- Monitoring systems

- Drift detection

- Infrastructure scaling

- Cost optimisation

- Access controls

- Integrations

- Security reviews

At that point, the real question becomes:

"Should our engineers be solving this problem — or our core product problem?"

The Real Differentiator

"Pulse's advantage isn't that it uses AI. Everyone uses AI now. The difference is how intelligence is structured, contextualised, and operationalised into decisions tied to business outcomes. Not AI answers. AI decisions."

A Simple Way to Think About It

Building an LLM prototype is like assembling a racecar engine on a table.

Building Pulse is:

- Designing the car

- Tuning the engine

- Building the track

- Training the driver

- Installing telemetry

- And winning repeatedly

The engine alone isn't the system.

Final Thought

LLMs didn't eliminate the need for platforms. They made platforms more important.

Because as raw intelligence becomes easy to generate, structured intelligence becomes the real competitive advantage.

That's what Pulse is built for.

Pulse, where feedback becomes decisions — and decisions become growth.
